Configuration

Dask-CHTC uses Dask’s configuration system for most configuration needs. Dask stores configuration files in YAML format in the directory ~/.config/dask (where ~ means “your home directory”). Any YAML files in this directory will be read by Dask when it starts up and integrated into its runtime configuration.
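As a rough illustration of what "integrated into its runtime configuration" means, the sketch below merges two nested dictionaries the way several YAML files would be combined into one configuration tree. It is a simplified stand-in written for this page, not Dask's own code; the function name `merge_config` and the sample values are assumptions made for the example.

```python
from copy import deepcopy


def merge_config(base: dict, override: dict) -> dict:
    """Return a new dict in which nested keys from `override` win over `base`."""
    merged = deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Both sides are mappings: merge them key by key.
            merged[key] = merge_config(merged[key], value)
        else:
            # Scalars, lists, or mismatched types: the later source replaces the earlier one.
            merged[key] = deepcopy(value)
    return merged


# Hypothetical contents of two files in ~/.config/dask, already parsed from YAML.
first_file = {"jobqueue": {"chtc": {"cores": 1, "memory": "2 GiB"}}}
second_file = {"jobqueue": {"chtc": {"memory": "4 GiB"}}}

runtime_config = merge_config(first_file, second_file)
print(runtime_config)
```

In real use you would not merge anything yourself: once Dask has started, a call such as `dask.config.get("jobqueue.chtc.memory")` reads the already-combined value.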

Configuring Dask-CHTC

Dask-CHTC’s CHTCCluster is a type of Dask-Jobqueue cluster, so it is configured through Dask-Jobqueue’s configuration system.

This is the default configuration file included with Dask-CHTC:

    jobqueue:
      chtc:
        # The internal name prefix for the Dask workers
        name: dask-worker

        # The HTCondor JobBatchName for the worker jobs.
        batch-name: dask-worker

        # Worker job resource requests and other options.
        cores: 1  # Number of cores per worker job
        gpus: null  # Number of GPUs per worker job
        memory: "2 GiB"  # Amount of memory per worker job
        disk: "10 GiB"  # Amount of disk per worker job
        processes: null  # Number of Python processes per worker (null lets Dask decide)

        # Whether to use GPULab machines.
        gpu-lab: false

        # What Docker image to use for the Dask worker jobs.
        worker-image: "daskdev/dask:latest"

        # Send HTCondor job log files to this directory
        log-directory: null

        # Extra command line arguments for the Dask worker.
        extra: []

        # Extra environment variables for the Dask worker.
        env-extra: []

        # Extra submit descriptors; not all are available because some are used internally.
        job-extra: {}

        # Extra options for the Dask scheduler
        scheduler-options: {}

        # Number of seconds to die after if the worker can not find a scheduler.
        death-timeout: 60

        # INTERNAL OPTIONS BELOW
        # You probably don't need to change these!

        # Directory to spill extra worker memory to (null lets Dask decide)
        local-directory: null

        # Controls the shebang of the job submit file that jobqueue will generate.
        shebang: "#!/usr/bin/env condor_submit"

        # Networking options.
        interface: null

A copy of this file (with everything commented out) will be placed in ~/.config/dask/jobqueue-chtc.yaml the first time you run Dask-CHTC. Options found in that file are used as defaults for the runtime arguments of CHTCCluster and its parent classes in Dask-Jobqueue, starting with dask_jobqueue.HTCondorCluster. You can override any of them at runtime by passing different arguments to the CHTCCluster constructor.
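The relationship between the file and the constructor arguments can be pictured with a small sketch: an argument left as `None` falls back to the value stored under `jobqueue.chtc`, while an explicit argument wins. The helper names `lookup` and `resolve_options` are hypothetical and only model the behaviour described above; they are not part of Dask-CHTC or Dask-Jobqueue.

```python
from functools import reduce

# A trimmed copy of the defaults from the configuration file shown earlier.
CONFIG = {"jobqueue": {"chtc": {"cores": 1, "memory": "2 GiB", "disk": "10 GiB"}}}


def lookup(config: dict, dotted_key: str):
    """Walk a nested dict using a dotted key such as 'jobqueue.chtc.cores'."""
    return reduce(lambda node, part: node[part], dotted_key.split("."), config)


def resolve_options(cores=None, memory=None, disk=None) -> dict:
    """Use each explicit argument if given, otherwise the configured default."""
    explicit = {"cores": cores, "memory": memory, "disk": disk}
    return {
        name: value if value is not None else lookup(CONFIG, f"jobqueue.chtc.{name}")
        for name, value in explicit.items()
    }


print(resolve_options())                        # every value comes from CONFIG
print(resolve_options(cores=4, memory="8 GiB"))  # disk still comes from CONFIG
```

In practice this corresponds to a call such as `CHTCCluster(cores=4, memory="8 GiB")`: `cores` and `memory` are Dask-Jobqueue arguments, and any option you leave out keeps its value from the file.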

Dask-CHTC provides a command line tool to help inspect and edit its configuration file. For full details, run dask-chtc config --help. The subcommands of dask-chtc config will (among other things) let you show the contents of the configuration file, open it in your editor, and reset it to the package defaults.

Warning

Dask-CHTC is prototype software, and the names and meanings of configuration options are not necessarily stable. Be prepared to reset your configuration to track changes in Dask-CHTC!

Configuring the Dask JupyterLab Extension

The Dask JupyterLab extension lets you view the Dask scheduler’s dashboard as part of your JupyterLab. It can also be used to launch a Dask cluster. To configure the cluster that it launches, you write a Dask configuration file, typically stored at ~/.config/dask/labextension.yaml. Here is a minimal configuration file for launching a CHTCCluster:

    labextension:
      factory:
        module: 'dask_chtc'
        class: 'CHTCCluster'
        kwargs: {}
      default:
        workers: null
        adapt: null

Configuration options set via ~/.config/dask/jobqueue-chtc.yaml will be honored by the JupyterLab extension. Note that the arguments you specify under kwargs in the extension configuration file are passed as if you were calling the CHTCCluster constructor directly.
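To make "as if you were calling the constructor directly" concrete, here is a minimal sketch of how a `factory` entry could be turned into an object: import `module`, look up `class`, and call it with `kwargs`. This models the idea only and is not the extension's actual code; the function name `build_from_factory` is hypothetical, and a standard-library class stands in for `CHTCCluster` so the snippet runs anywhere.

```python
from importlib import import_module


def build_from_factory(factory: dict):
    """Import factory['module'], fetch factory['class'], call it with factory['kwargs']."""
    module = import_module(factory["module"])
    cls = getattr(module, factory["class"])
    return cls(**factory.get("kwargs", {}))


# Stand-in for the labextension 'factory' section; with Dask-CHTC installed it
# would name module 'dask_chtc' and class 'CHTCCluster' instead.
factory = {
    "module": "datetime",
    "class": "timedelta",
    "kwargs": {"hours": 1, "minutes": 30},
}

duration = build_from_factory(factory)
print(duration)  # prints 1:30:00
```

Under that model, an entry such as `kwargs: {cores: 2}` would behave like `CHTCCluster(cores=2)`, with anything not listed still coming from jobqueue-chtc.yaml.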

To connect to the cluster created by the lab extension, you must pass the appropriate security options through. First, get the security options:

from dask_chtc import CHTCCluster

sec = CHTCCluster.security()

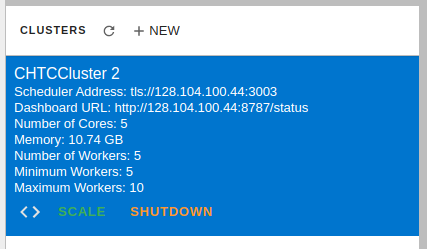

Then, (after creating a new cluster by clicking +NEW),

click the <> button to insert a cell with the right cluster address:

And modify it to use the security options

by adding the security keyword argument:

from dask.distributed import Client

client = Client("tls://128.104.100.44:3003", security=sec)

client